
> that type of consumer hardware has been consistently riddled with vulnerabilities

USB signing devices aren't really consumer hardware, are they? I don't recall vulns in HSMs being a major source of leaks previously, but I'm sure the game will move there sooner or later.

> Intel essentially gave up on SGX because they couldn't make it work

Intel are selling SGX today, have built new features on top of it, it works fine, and all their competitors have been investing heavily into catching up.

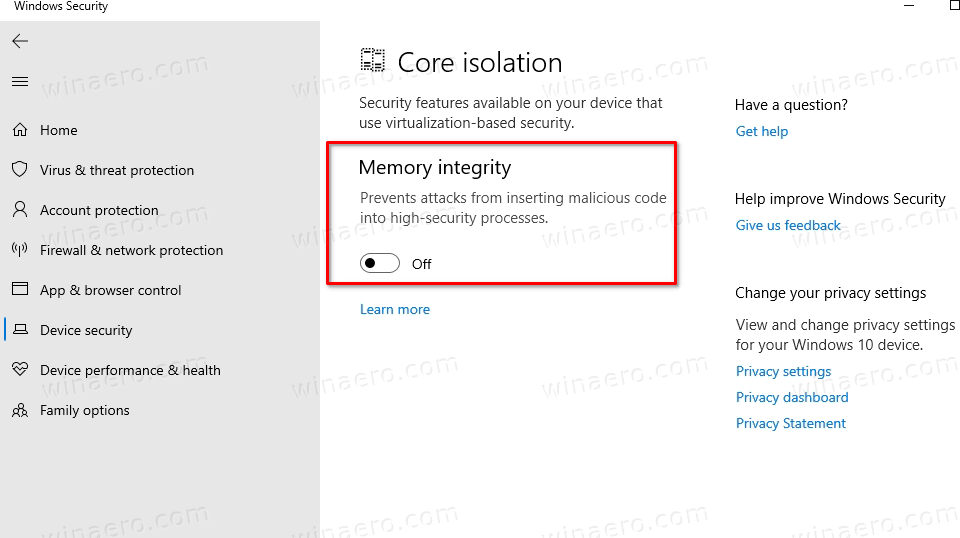

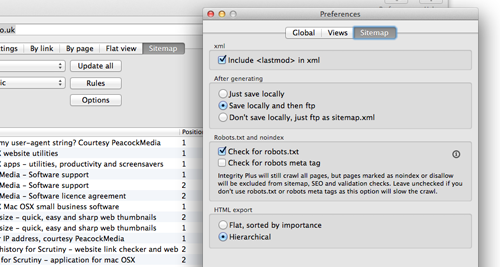

MacOS by default doesn't run unsigned or incorrectly signed apps, period. Only Apple can hand out certificates, and certificates are at the very least associated with payment info (though sometimes they want more, a DUNS number or whatever). Signed application bundles remove many attack vectors.

1. A malicious entity can sign up for a developer account.
2. A non-malicious entity's certificate can be compromised.

(1) does not seem to happen often; if it does happen, Apple can revoke the certificate. They can also increase the burden of proof for creating a developer account if it becomes more common. But in general there is a strong incentive for developers to properly protect their signing keys, because Apple could ban them by not signing their keys in the case of repeated issues.

Code signing substantially increases platform security, and as a 16-year macOS user I would not want to go back to pre-signing days, where you could never be sure whether an application bundle was compromised unless you verified the archive/disk image with GnuPG. But that is opening a big can of worms (WoT, etc.).

So you're going to find these people anonymously, now? How? You'll have to ask a lot of people before someone agrees to actually set up a fake company for you, you'll also have to find ways to pay them without losing your anonymity, and they will have to explain at some point to the tax authority where this unexpected income came from and what the company they've set up actually does.

If it was that easy to hide your tracks, police would never catch criminals, but they do.

Every time you increase the complexity of the scheme, the chance for mistakes goes up.
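For anyone who wants the signing policy from the macOS comment spelled out, here is a rough sketch of the default-deny rule: unsigned, badly signed, or revoked means no launch. Everything in it (`Bundle`, `may_launch`, the signer IDs, the revocation set) is made up for illustration and is not how Apple's actual checks are implemented.

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass(frozen=True)
class Bundle:
    """Hypothetical stand-in for an application bundle."""
    name: str
    signer_id: Optional[str]  # None means the bundle is unsigned
    signature_valid: bool     # result of a signature check done elsewhere


def may_launch(bundle: Bundle, revoked_signers: Set[str]) -> bool:
    """Default-deny policy: run only validly signed, non-revoked bundles."""
    if bundle.signer_id is None:
        return False  # unsigned
    if not bundle.signature_valid:
        return False  # incorrectly signed or tampered with
    # A compromised or abusive developer is handled by revoking the signer.
    return bundle.signer_id not in revoked_signers


# Hypothetical data: one revoked developer certificate.
revoked = {"DEV-BAD"}
good = Bundle("Editor.app", "DEV-OK", True)
unsigned = Bundle("Tool.app", None, False)
tampered = Bundle("Editor.app", "DEV-OK", False)
banned = Bundle("Malware.app", "DEV-BAD", True)
```

Revocation is what covers both failure cases in the list: the malicious signup and the stolen key end up in the same revoked set.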

I would love to see a LetsEncrypt style service for OSS but I assume it's against the core interests of Microsoft / Apple to allow something like this as it would start to drive people away from the walled gardens of the app stores.

People have been asking Let's Encrypt itself for this on the Let's Encrypt forum since the project was founded. The usual answer is that code signing certificates are (supposedly) trying to attest to a legal identity in the hope of being able to punish people offline if they publish malware, or allow people or organizations to have a policy about only installing software known to be from a certain list of publishers. DV certificates for HTTPS are trying to attest to control of a name in the DNS, which is verifiable by automated technical means, and which is not necessarily related to offline identity. ([...] which isn't always complied with, and which, following increased pressure from European privacy law, is often not visible to the public.)

A Let's Encrypt certificate would confirm that a certain key is apparently controlled by someone who apparently also controls a certain DNS name. But a code signing certificate would supposedly go further and confirm that it's apparently controlled by someone acting on behalf of a certain named legal person existing in a certain jurisdiction. This is much more expensive to verify usefully, although maybe some governments will eventually have a way to automate it.

This isn't to say that either kind of certificate is necessarily ideal for all of the different uses to which relying parties end up putting it nowadays, but just that what they're attesting to, and how you would verify it, is pretty different.

Edit: There seems to be a longer discussion about related points in this thread already at
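To make "verifiable by automated technical means" a bit more concrete, here is a minimal sketch modeled on the HTTP-01 challenge from the ACME protocol (RFC 8555), which is the style of check used for DV certificates. The CA hands out a token, the applicant serves `token.thumbprint` from the domain, and the CA compares what it fetched. The key material and token below are made-up placeholders, the fetch itself is left out, and this is not Let's Encrypt's actual code.

```python
import base64
import hashlib
import json


def b64url(data: bytes) -> str:
    """Base64url without padding, as used by JOSE/ACME."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def jwk_thumbprint(jwk: dict) -> str:
    """SHA-256 JWK thumbprint (RFC 7638): required members only,
    sorted by name, serialized without whitespace."""
    required = {"RSA": ("e", "kty", "n"), "EC": ("crv", "kty", "x", "y")}[jwk["kty"]]
    canonical = json.dumps(
        {k: jwk[k] for k in required}, sort_keys=True, separators=(",", ":")
    )
    return b64url(hashlib.sha256(canonical.encode("utf-8")).digest())


def key_authorization(token: str, account_jwk: dict) -> str:
    """Value the applicant must serve for the challenge token."""
    return f"{token}.{jwk_thumbprint(account_jwk)}"


def challenge_passes(served_body: str, token: str, account_jwk: dict) -> bool:
    """CA-side comparison of the fetched body with the expected value."""
    return served_body.strip() == key_authorization(token, account_jwk)


# Placeholder account key and token, for illustration only.
account_jwk = {"kty": "EC", "crv": "P-256", "x": "placeholder-x", "y": "placeholder-y"}
token = "example-token"
expected = key_authorization(token, account_jwk)
```

Nothing here touches a legal identity: the check only ties a key to whoever can publish a file under the name, which is why it can run unattended and why the code signing case is harder.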
